Steps vs PITA: what's the difference?

Whilst Insight offers near-limitless possibilities in terms of the type and format of data you can store, when it comes to recording teacher assessment there are essentially two broad approaches: Steps and 'Point In Time Assessment' (or PITA).

So, what's the difference?

Steps

Steps are like levels: a hierarchical system that pupils progress through as they learn and secure more of the curriculum. Each step will usually be assigned a point value, and an expected rate of progress will be set, with extra points gained by pupils who catch up or master content. In the aftermath of the removal of levels, these systems tended to stick to the familiar '3 points per year/1 point per term' approach, but more granular systems, offering up to 10 points per year, have since proliferated. A common version involves pupils beginning the year classified as 'emerging', moving on to 'developing' mid-year, before arriving at 'secure' later on. Commonly, there will be a final band for 'greater depth', which awards an extra point. Insight can easily accommodate steps-based approaches, and many schools have implemented variations on this theme.

PITA

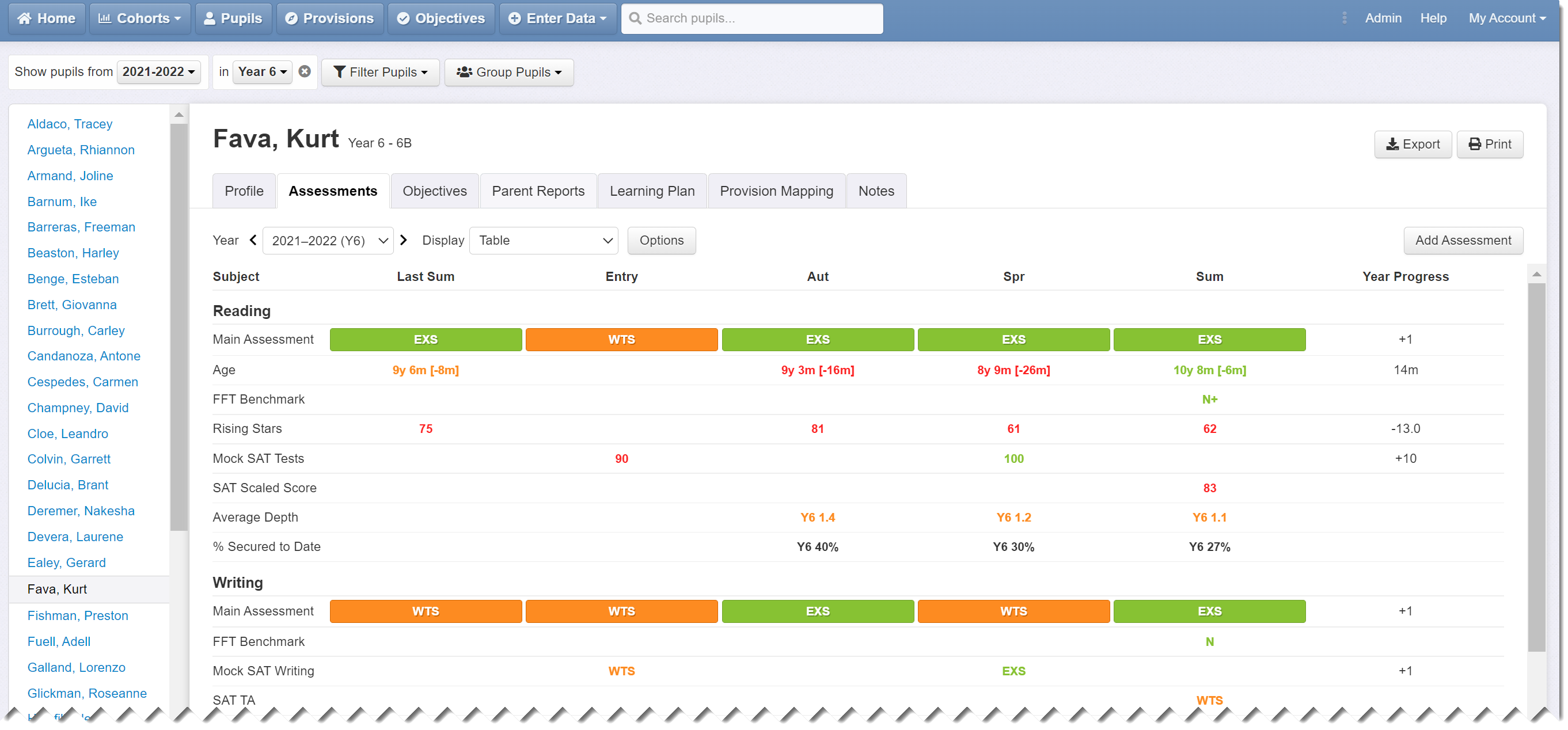

Unlike steps, PITA is horizontal rather than hierarchical, with pupils often staying in the same band throughout their time at school. Essentially, with a PITA-style approach, teachers are stating whether pupils are keeping pace with the demands of the curriculum, or perhaps whether they are 'on track' to meet expectations by the end of the year. The assessment therefore reflects the pupils' security in the curriculum at that point in time. Schools commonly use four assessment bands (e.g. working below, working towards, expected, and greater depth, or variations on that theme) but can have more if desired. Unlike in steps-based approaches, pupils do not start the year in the first band and move up. Instead, a pupil who is keeping pace might be 'expected' in the autumn and remain there throughout the year, but could move up or down a band depending on how they progress. It should also be noted that a pupil who finishes the year at 'greater depth' will most likely be 'greater depth' at the start of the following year unless issues arise. Again, Insight can accommodate PITA-style approaches: users can have any number of bands and can name them as they wish.

Example of PITA bands in Insight: note that assessments can go down as well as up, reflecting the pupil's security in the curriculum at that point. Pupils can be 'greater depth' at the start of the year and then drop later on if they begin to struggle with new content. Many will stay in the same band all year.

What are the pros and cons?

A steps-based approach involves a numerical progress measure and an expected rate; a PITA-style approach does not. For many schools, a progress measure is important, but it is becoming less so since Ofsted took the decision to disregard schools' internal data.

With a PITA-style approach, what you lose in terms of progress measures, you gain in terms of clarity. Often, with steps, the majority of pupils are classified as, for example, 'emerging' in the autumn term, which is not very informative. With PITA, on the other hand, you will be able to see at any point in time which pupils are working below, struggling, coping well, or attaining high standards. Because it uses a common language, assessment is always directly comparable with prior attainment and future expectations. It also gets around the changing terminology and shifting meaning associated with steps-based approaches where, for example, 'emerging' equates to expected in the autumn, just below in the spring, and well below in the summer.

The other issue with steps is how to define them. Often this will involve thresholds based on curriculum coverage (e.g. 33% by the end of the autumn term), which may or may not be realistic depending on subject, year group, curriculum design, and teaching.

PITA also gets away from the implication of linear progression. We need to consider whether a pupil who finishes consecutive years as 'emerging' has really made the same amount of progress as a pupil who ends each year classified as 'secure'. Steps-based approaches will often award both cases the same number of points, but does that reflect reality?
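
To make the linear-progression point concrete, here is a minimal Python sketch assuming a hypothetical cumulative scale of 3 points per year (emerging = 1, developing = 2, secure = 3 within each year group). The scale and function names are illustrative assumptions, not how Insight calculates points.

```python
# Hypothetical steps scale: 3 steps per year, one point per step.
# These point values are illustrative assumptions, not Insight's actual scale.
STEPS = {"emerging": 1, "developing": 2, "secure": 3}
POINTS_PER_YEAR = 3


def step_points(year: int, step: str) -> int:
    """Cumulative points for a step in a given year group (assumed scale)."""
    return (year - 1) * POINTS_PER_YEAR + STEPS[step]


def points_gained(start_year: int, start_step: str,
                  end_year: int, end_step: str) -> int:
    """Points gained between two assessments on the assumed scale."""
    return step_points(end_year, end_step) - step_points(start_year, start_step)


# Pupil A ends Year 3 and Year 4 as 'emerging'; pupil B ends both as 'secure'.
gain_a = points_gained(3, "emerging", 4, "emerging")
gain_b = points_gained(3, "secure", 4, "secure")
print(gain_a, gain_b)  # 3 3
```

Both pupils gain the same 3 points, even though one has stayed below expectations throughout and the other has stayed secure.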

Most schools now prefer a PITA approach because it's clear and simple, aligns with KS1 and KS2 assessment, matches the language used in the classroom, and makes reporting to audiences such as governors much more straightforward.

Reporting progress in Insight

Steps usually have an expected rate of progress, which can be set in a Progress Overview report. Pupils who make that progress are colour-coded green, those who make more are blue, and those who make less are red. Steps do not work so well in Progress Matrices, because step labels shift in meaning through the year and so cannot be compared directly with prior attainment.
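
A minimal sketch of the colour-coding just described, assuming progress is measured as points gained over the period and compared with a single expected rate. The function name and the 3-points-per-year rate are hypothetical, not Insight's implementation.

```python
def steps_progress_colour(points_gained: float, expected_rate: float) -> str:
    """Colour-code a pupil's points gained against the expected rate."""
    if points_gained > expected_rate:
        return "blue"   # more than expected progress
    if points_gained < expected_rate:
        return "red"    # less than expected progress
    return "green"      # exactly the expected progress


EXPECTED_RATE = 3  # assumed: 3 points per year
gains = {"Pupil A": 2, "Pupil B": 3, "Pupil C": 4}
for pupil, gain in gains.items():
    print(pupil, steps_progress_colour(gain, EXPECTED_RATE))
# Pupil A red
# Pupil B green
# Pupil C blue
```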

PITA is better suited to Progress Matrices, where assessments share a common language and can be compared at any point with prior attainment or future targets. PITA assessments also work in Progress Overviews in a similar way to value added (VA): pupils who maintain their band score 0 (green), those who move up a band score +1 (blue), and those who move down a band score -1 (red).
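
The VA-style scoring can be sketched as the difference in band position between the starting and current assessments. The band names below are one example set (schools can name and number their own), and treating a two-band move as +2/-2 is an assumption, since only single-band moves are described above. This is an illustration, not Insight's implementation.

```python
# Example band order, lowest to highest; schools can use their own bands.
BANDS = ["working below", "working towards", "expected", "greater depth"]


def pita_score(start_band: str, current_band: str) -> int:
    """VA-style score: bands moved since the starting assessment.

    0 means the band was maintained; a two-band move giving +2/-2 is an
    assumption for illustration.
    """
    return BANDS.index(current_band) - BANDS.index(start_band)


def score_colour(score: int) -> str:
    """Green for maintained, blue for moved up, red for moved down."""
    if score > 0:
        return "blue"
    if score < 0:
        return "red"
    return "green"


print(pita_score("expected", "expected"))       # 0
print(pita_score("expected", "greater depth"))  # 1
print(pita_score("greater depth", "expected"))  # -1
```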

To summarise...

Steps are hierarchical like levels. They provide a progress measure but can lack clarity, especially earlier in the year, and the thresholds that define them may be arbitrary. Steps are well suited to Progress Overviews in Insight but work less well in Progress Matrices.

PITA is non-hierarchical. Pupils often remain in the same band throughout and can be 'expected' or 'greater depth' in the autumn. PITA bands do not provide a progress measure like steps, but can be used to generate a simple VA-style relative progress score in Progress Overviews. They are well suited to Progress Matrices and tend to provide better clarity and comparability.